
Our rotating proxy server Proxies API provides a simple API that can solve all IP Blocking problems instantly.News about the dynamic, interpreted, interactive, object-oriented, extensible programming language Python Current Events It only takes one line of integration to its hardly disruptive. Plus, with the 1000 free API calls running an offer, you have almost nothing to lose by using our rotating proxy and comparing notes.

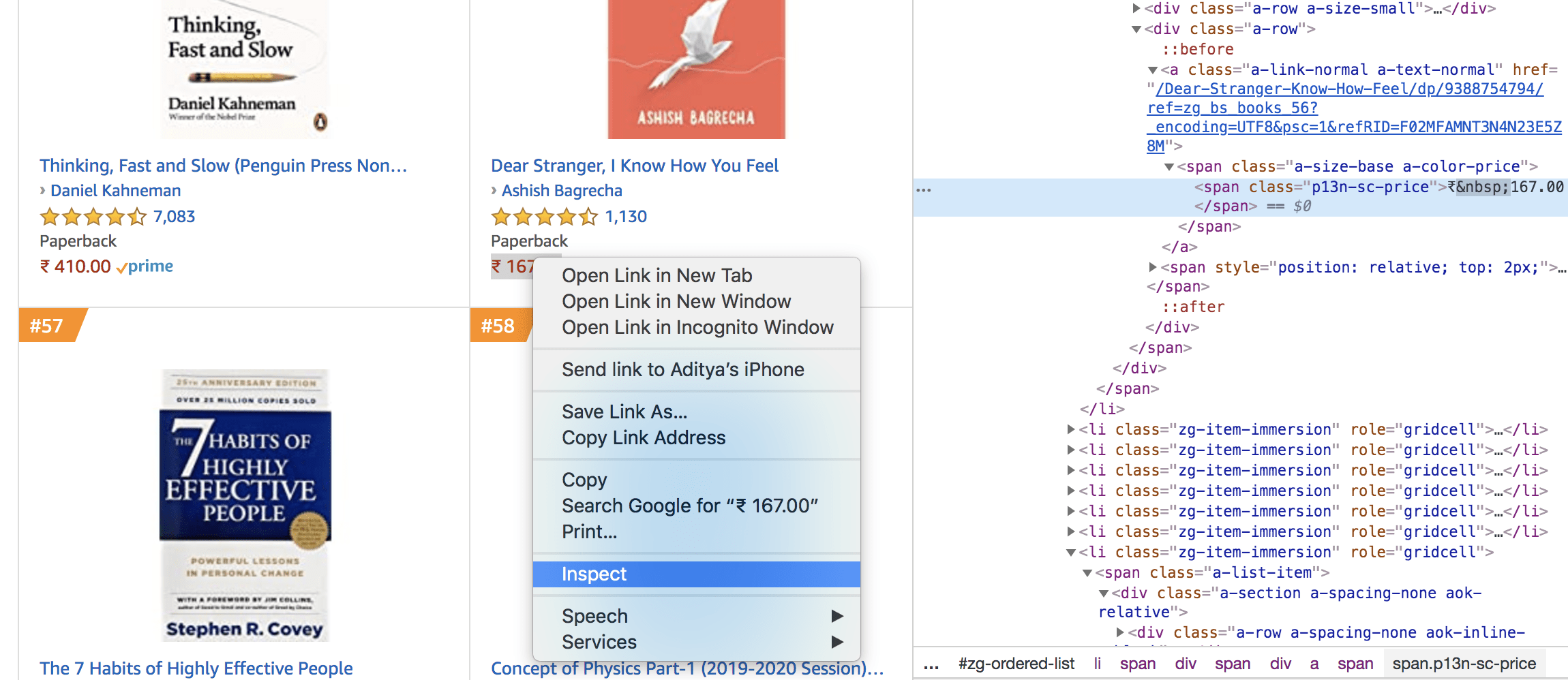

Investing in a private rotating proxy service like Proxies API can most of the time make the difference between a successful and headache-free web scraping project, which gets the job done consistently and one that never really works. It is a bummer, and this is where most web crawling projects fail. If we get a little bit more advanced, you will realize that Wikipedia can simply block your IP, ignoring all your other tricks. In more advanced implementations, you will need even to rotate this string, so Wikipedia can't tell it the same browser! Welcome to web scraping. It is done by passing the user agent string to the Wikipedia web server, so it doesn't block you. Web servers can tell you are a bot, so one of the things you can do is run the crawler impersonating a web browser. But if you try to scrape large quantities of data at high speeds, you will find that sooner or later, your access will be restricted. The example above is ok for small scale web crawling projects. When you run it now, it will keep all the images into the storage folder like so. Print('^^^ fetched image : ' response.url)įilename1 = 'storage/' ('/') Yield scrapy.Request(item, callback=self.parse_image) For that, we need to use the Request command fetch the photos one after another and extract the file name from the URL and save it into the local disk, as shown below. Scrapy runspider crawlImages.py -s USER_AGENT="Mozilla/5.0 (Windows NT 6.1 WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/.131 Safari/537.36" -s ROBOTSTXT_OBEY=False Let's save it as crawlImages.py and then run it with these parameters, which tells scrapy to disobey Robots.txt and also to simulate a web browser. Links = response.css('img::attr(src)').extract() It will give us the pictures and their captions. 
Titles = response.css('img::attr(alt)').extract() links = response.css('img::attr(src)').extract() We can just use the IMG tag and get the SRC attribute for the image path and the ALT attribute for the caption of the image if we want. Now let's see what we can write in the parse function. Here is where we can write our code to extract the data we want. The def parse(self, response): function is called by scrapy after every successful URL crawl. For us, in this example, we only need one URL. We need nyt.com as the images are stored there, as you will see below. The allowed_domains array restricts all further crawling to the domain paths specified here. Let's examine this code before we proceed. # -*- coding: utf-8 -*-įrom scrapy.spiders import CrawlSpider, RuleĪllowed_domains =

Once installed, we will add a simple file with some barebones code like so.

Now let's try and download all those interesting images that define the world every day.įirst, we need to install scrapy if you haven't already. Today lets look at how we can build a simple scraper to pull out and save all the pictures from a website like The New York Times. One of the most common applications of web scraping according to the patterns we see with many of our customers at Proxies API is scraping images from websites. Scrapy is one of the most accessible tools that you can use to scrape and also spider a website with effortless ease.
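Two of the steps described in this article, deriving a local file name from an image URL and rotating the user agent string, can be sketched with the standard library alone. This is a minimal illustration and not the article's own code: the helper names `local_path_for` and `pick_user_agent`, the `USER_AGENTS` pool, and the placeholder browser strings are all assumptions made for the example.

```python
import posixpath
import random
from urllib.parse import urlsplit

# Hypothetical pool of user agent strings (placeholders, not real browsers).
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) ExampleBrowser/1.0",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) ExampleBrowser/2.0",
]


def local_path_for(url, folder="storage"):
    """Build folder/<last path segment of url>, ignoring any query string."""
    name = posixpath.basename(urlsplit(url).path)
    if not name:
        # URLs that end in "/" have no file name; fall back to a fixed one.
        name = "unnamed"
    return posixpath.join(folder, name)


def pick_user_agent(pool=USER_AGENTS):
    """Pick one user agent string at random from the pool."""
    return random.choice(pool)


if __name__ == "__main__":
    print(local_path_for("https://example.com/images/photo.jpg?quality=75"))
    print(pick_user_agent())
```

Inside a Scrapy callback, the path returned by `local_path_for(response.url)` could be opened in binary mode and written with `response.body`, assuming the `storage` folder already exists.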
